Monthly Archives: February 2013

Deduplication Internals – Content Aware deduplication : Part-3

Continuing from Part 1 and Part 2, this part discusses content-aware (also called application-aware) deduplication.

This type of deduplication is generally called byte-level deduplication, because the deduplication happens at the deepest level: bytes.

Content-aware technologies (also called byte-level deduplication or delta-differencing deduplication) work in a fundamentally different way. The key element of the content-aware approach is that it uses a higher level of abstraction when analyzing the data. Unlike hash-based dedupe, which tries to find redundancies at the block level, content-aware deduplication looks at the data as objects and compares them to other objects of the same kind (e.g., Word document to Word document, or Oracle database to Oracle database).

Here the deduplication engine sees the actual objects (files, database objects, application objects, etc.) and divides the data into larger segments (usually 8 MB to 100 MB in size). Then, typically by using knowledge of the content of the data (hence “content-aware”), it finds segments that are similar and stores only the changed bytes between the objects. That is, a byte-level comparison is performed.
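As a rough illustration of "store only the changed bytes", here is a minimal Python sketch. It uses the standard library's `difflib.SequenceMatcher` as a stand-in for a real delta-differencing engine. The function names `make_delta`, `apply_delta` and `literal_bytes` are made up for this example, and real products work on far larger segments with their own algorithms.

```python
from difflib import SequenceMatcher


def make_delta(base: bytes, new: bytes):
    """Describe `new` as copies from `base` plus the literal changed bytes."""
    delta = []
    matcher = SequenceMatcher(None, base, new, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            # These bytes already exist in the stored object: keep a reference.
            delta.append(("copy", i1, i2))
        elif j2 > j1:
            # "replace" or "insert": only these bytes need to be stored.
            delta.append(("data", new[j1:j2]))
        # A pure "delete" contributes nothing to the new object.
    return delta


def apply_delta(base: bytes, delta) -> bytes:
    """Rebuild the new object from the stored base and the delta."""
    out = bytearray()
    for op in delta:
        if op[0] == "copy":
            out += base[op[1]:op[2]]
        else:
            out += op[1]
    return bytes(out)


def literal_bytes(delta) -> int:
    """Number of bytes that had to be stored for the new object."""
    return sum(len(op[1]) for op in delta if op[0] == "data")


base = b"deduplication technologies are becoming more an more important now."
new = b"deduplication technologies are becoming more and more important now."
delta = make_delta(base, new)
print(len(new), "bytes in the new object,", literal_bytes(delta), "stored as new data")
```

The second object is kept as a short list of references into the first one plus the few bytes that differ, which is the idea behind delta differencing.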

In more detail: before the dedupe process, unique metadata is typically inserted for each data type, such as database, email, photo, or document. During the dedupe process, this metadata is extracted from the data block and examined to understand what kind of data the block contains. Based on the type of data, a block size is assigned that is optimized for the kind of data being backed up.

Since the block size is already optimized for that particular data type, CPU cycles don’t need to be wasted on determining block boundaries. Compare this to other (hash-based) deduplication techniques, where the data is chopped up blindly to find the block boundaries, that is, the block lengths, before duplicate segments can be identified.

The example below gives more insight:

Suppose I save a photo, then open it, edit one pixel, and save the new version as a new file. There typically won’t be a single duplicate block at the disk level, even though almost the entire file is duplicate information. Similarly, can you find a duplicate graphic that was used in a PowerPoint deck, a Word document, and a PDF? PowerPoint and Word both compress with a variant of zip, so even if the graphic is identical, block-level dedupe won’t find the duplicates because they are not stored identically on disk. You need something that can find duplicate data at the information level, and the answer is content-aware or application-aware dedupe solutions.

This approach provides a good balance between performance and resource utilization.

CommVault Simpana, Symantec Backup Exec, Symantec NetBackup, Dell DR4000, Sepaton’s DeltaStor, and ExaGrid are solutions that use this technology.

VMware ESXi network driver install and upgrade

Recently I had to perform a network driver update on an ESXi host, and the process is straightforward. Here I am updating the Ethernet card driver on ESXi 5, but the same process can be used to update any driver on an ESXi or ESX host.

VMware uses a file package called a VIB (vSphere Installation Bundle) as the mechanism for installing or upgrading software packages and drivers on an ESX server.

The file can be installed directly on an ESX server from the command line, or deployed using VMware Update Manager. Here I describe the command-line method of doing the upgrade.

Install or update a patch/driver on the host using these esxcli commands:

COMMAND LINE INSTALLATION

New Installation

For new installs, issue the following command (the full path to the file must be specified):

esxcli software vib install -v {VIBFILE} or esxcli software vib install -d {OFFLINE_BUNDLE}

Upgrade Installation

The upgrade process is similar to a new install, except the command that should be issued is the following:

esxcli software vib update -v {VIBFILE} or esxcli software vib update -d {OFFLINE_BUNDLE}

Notes:

- To install or update a .zip file, use the -d option. To install or update a .vib file use the -v option.

- The install method has the possibility of overwriting existing drivers. If you are using 3rd party ESXi images, VMware recommends using the update method to prevent an unbootable state.

- Depending on the certificate used to sign the VIB, you may need to change the host acceptance level. To do this, use the following command: esxcli software acceptance set --level=<level>

Usage: esxcli software acceptance set [cmd options]

Description:

Set (Sets the host acceptance level. This controls what VIBs will be allowed on a host.)

Cmd options:

--level=< VMwareCertified / VMwareAccepted / PartnerSupported / CommunitySupported >

(Specifies the acceptance level to set. Should be one of VMwareCertified / VMwareAccepted / PartnerSupported / CommunitySupported.)
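The rules from the notes above (a .vib file takes -v, a .zip offline bundle takes -d, and the full path is required) can be captured in a small helper. This is only a sketch: `build_vib_command` is a hypothetical name, and it just builds the argument list for the esxcli commands shown above without running anything on a host.

```python
import posixpath
from typing import List


def build_vib_command(path: str, action: str = "install") -> List[str]:
    """Build the esxcli argument list for installing or updating a driver package."""
    if action not in ("install", "update"):
        raise ValueError("action must be 'install' or 'update'")
    # esxcli needs the full path to the file, so reject relative paths early.
    if not posixpath.isabs(path):
        raise ValueError("the full path to the file must be specified")
    if path.endswith(".vib"):
        flag = "-v"  # single VIB file
    elif path.endswith(".zip"):
        flag = "-d"  # offline bundle
    else:
        raise ValueError("expected a .vib file or a .zip offline bundle")
    return ["esxcli", "software", "vib", action, flag, path]


print(" ".join(build_vib_command(
    "/tmp/nx_nic-esx50-5.0.619-offline_bundle-743299.zip", "update")))
```

Building the command as a list rather than a string keeps the path as a single argument if the result is later handed to something like `subprocess.run`.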

STEPS

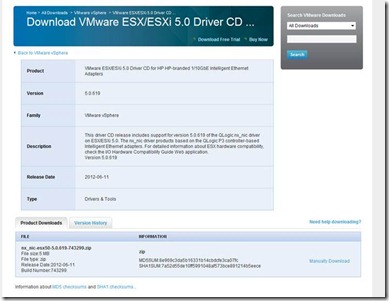

1, Download the corresponding driver from the VMware site, for example:

https://my.vmware.com/web/vmware/details?downloadGroup=DT-ESXI50-Qlogic-nx_nic-50619&productId=229

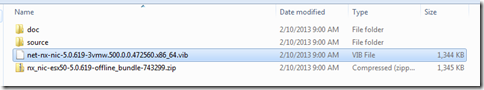

2, Extract nx_nic-esx50-5.0.619-743299.zip. Inside you will find net-nx-nic-5.0.619-3vmw.500.0.0.472560.x86_64.vib, which contains the driver, and nx_nic-esx50-5.0.619-offline_bundle-743299.zip, an offline bundle that also contains the driver and can be used for the installation instead.

3, Migrate or power off the virtual machines running on the host and put the host into maintenance mode. The host can be put into maintenance mode from the command line with:

ESXi: # vim-cmd hostsvc/maintenance_mode_enter

ESX: # vimsh -n -e hostsvc/maintenance_mode_enter

To exit maintenance mode afterwards, use the vim-cmd or vimsh command:

ESXi: # vim-cmd /hostsvc/maintenance_mode_exit

ESX: # vimsh -n -e /hostsvc/maintenance_mode_exit

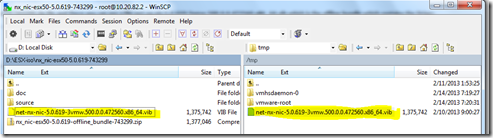

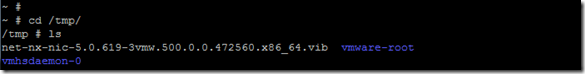

4, Upload the VIB file or offline bundle to /tmp on the ESXi host via SCP.

5, Open an SSH shell on the ESXi host, go to that directory, and check for the uploaded file.

cd /tmp

6, To install the driver, run: esxcli software vib install -v /tmp/net-nx-nic-5.0.619-3vmw.500.0.0.472560.x86_64.vib

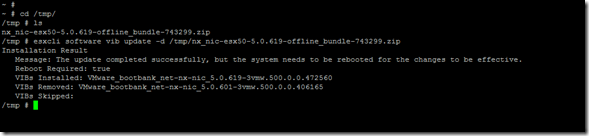

To upgrade the driver instead, run: esxcli software vib update -d /tmp/nx_nic-esx50-5.0.619-offline_bundle-743299.zip

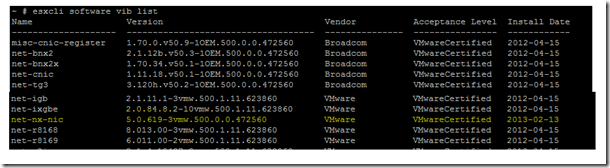

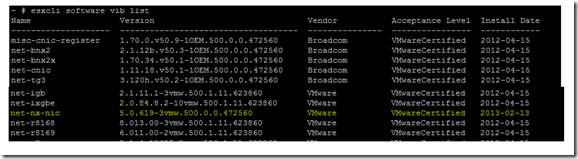

7, Verify that the VIBs are installed on your ESXi host:

# esxcli software vib list

8, Then reboot the ESXi host.

NOTES

If you don’t give the full path of the VIB file, the command shows an error.

If the VIB is already installed, you will see the output below:

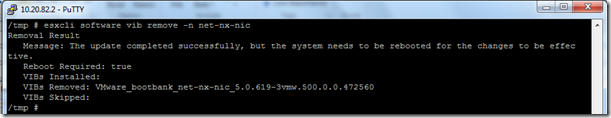

To remove a VIB, first find its name in the output of esxcli software vib list

Once you have the correct VIB name, run esxcli software vib remove -n net-nx-nic

Then reboot the ESXi server.

Deduplication Internals – Hash based deduplication : Part-2

In this second part we discuss the processes and techniques used to perform deduplication.

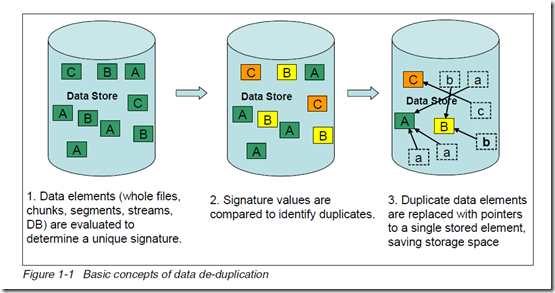

Different data de-duplication products use different methods of breaking up the data into elements, chunks, or blocks, but each product uses some technique to create a signature (also called an identifier or fingerprint) for each data element. As shown in the figure, the data store contains the three unique data elements A, B, and C, each with a distinct signature. These signature values are compared to identify duplicate data. After the duplicate data is identified, one copy of each element is retained, pointers are created for the duplicate items, and the duplicate items are not stored.

The basic concepts of data de-duplication are illustrated in the figure.

Classified by technology, that is, by how it is done, the two methods frequently used for de-duplicating data are hash based and content aware.

1 – Hash based Deduplication

Hash based data de-duplication methods use a hashing algorithm to identify “chunks” of data. Commonly used algorithms are Secure Hash Algorithm 1 (SHA-1) and Message-Digest Algorithm 5 (MD5). When data is processed by a hashing algorithm, a hash is created that represents the data. A hash is a bit string (128 bits for MD5 and 160 bits for SHA-1) that represents the data processed. If you process the same data through the hashing algorithm multiple times, the same hash is created each time.

Here are some examples of hash codes:

MD5 – 16 byte long hash

– # echo "The Quick Brown Fox Jumps Over the Lazy Dog" | md5sum

9d56076597de1aeb532727f7f681bcb0

– # echo "The Quick Brown Fox Dumps Over the Lazy Dog" | md5sum

5800fccb352352308b02d442170b039d

SHA-1 – 20 byte long hash

– # echo "The Quick Brown Fox Jumps Over the Lazy Dog" | sha1sum

f68f38ee07e310fd263c9c491273d81963fbff35

– # echo "The Quick Brown Fox Dumps Over the Lazy Dog" | sha1sum

d4e6aa9ab83076e8b8a21930cc1fb8b5e5ba2335

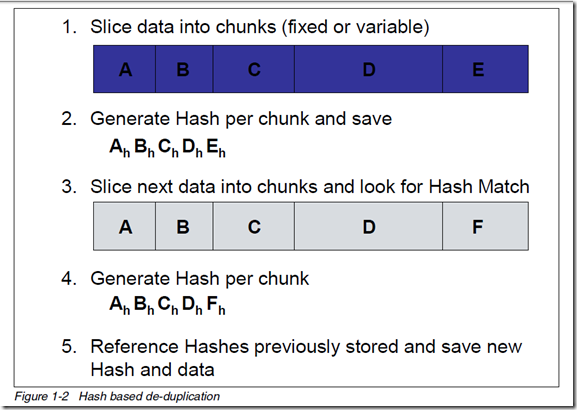

Hash based de-duplication breaks data into “chunks”, either fixed or variable length, and processes the “chunk” with the hashing algorithm to create a hash. If the hash already exists, the data is deemed to be a duplicate and is not stored. If the hash does not exist, then the data is stored and the hash index is updated with the new hash.

In Figure 1-2, data “chunks” A, B, C, D, and E are processed by the hash algorithm, which creates hashes Ah, Bh, Ch, Dh, and Eh; for purposes of this example, we assume this is all new data.

Later, “chunks” A, B, C, D, and F are processed. F generates a new hash Fh. Since A, B, C, and D generated the same hash, the data is presumed to be the same data, so it is not stored again. Since F generates a new hash, the new hash and new data are stored.
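The A to E, then A to D plus F walk-through above can be reproduced with a few lines of Python. This is a minimal sketch, assuming SHA-1 as the hashing algorithm and single-byte stand-ins for the chunks; the class name `HashIndex` is invented for the example.

```python
import hashlib


class HashIndex:
    """Toy hash-based dedupe store: one stored copy per distinct SHA-1 hash."""

    def __init__(self):
        self.chunks = {}  # hash (hex string) -> chunk data

    def write(self, chunk: bytes) -> bool:
        """Store the chunk if its hash is new. Returns True when data was stored."""
        digest = hashlib.sha1(chunk).hexdigest()
        if digest in self.chunks:
            return False  # hash already in the index: treated as a duplicate
        self.chunks[digest] = chunk  # new hash: store data and update the index
        return True


store = HashIndex()
first = [store.write(c) for c in (b"A", b"B", b"C", b"D", b"E")]
second = [store.write(c) for c in (b"A", b"B", b"C", b"D", b"F")]
print(first)    # every chunk in the first pass is new
print(second)   # in the second pass only F is new
print(len(store.chunks), "chunks stored for 10 chunks written")
```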

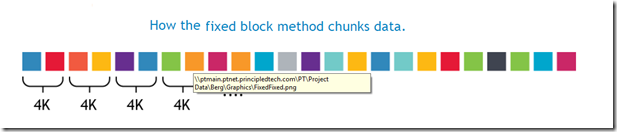

A – Fixed-Length or Fixed Block

In this data deduplication algorithm, the data is broken into chunks or blocks whose size (that is, whose boundaries) is fixed, for example 4 KB or 8 KB, and never changes. While different devices/solutions may use different block sizes, the block size for a given device/solution using this method remains constant.

The device/solution always calculates a fingerprint or signature on a fixed-size block and checks whether there is a match. After a block is processed, it advances by exactly the same size, takes another block, and the process repeats.

Advantages

Requires minimal CPU overhead; fast and simple.

Disadvantages

Because the block size is fixed, the main limitation of this approach is that when the data inside a file is shifted, for example when a slide is added to a Microsoft PowerPoint deck, all subsequent blocks in the file are rewritten and are likely to be considered different from those in the original file. Smaller block sizes give better deduplication than larger ones but take more processing; larger block sizes give lower deduplication but take less processing.

So the bottom line is lower storage savings and less efficiency.
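The shifting problem is easy to see in a small sketch. The 8-byte block size and the helper names `fixed_chunks` and `new_blocks` are assumptions for the demo; real devices use block sizes such as 4 KB or 8 KB.

```python
import hashlib


def fixed_chunks(data: bytes, block_size: int = 8):
    """Cut data into blocks of exactly block_size bytes (the last may be shorter)."""
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]


def new_blocks(old: bytes, new: bytes, block_size: int = 8) -> int:
    """Count blocks of `new` whose fingerprint was not seen in `old`."""
    known = {hashlib.sha1(c).hexdigest() for c in fixed_chunks(old, block_size)}
    return sum(1 for c in fixed_chunks(new, block_size)
               if hashlib.sha1(c).hexdigest() not in known)


original = bytes(range(256))       # 32 distinct 8-byte blocks
appended = original + b"\xff"      # one byte added at the end
prepended = b"\xff" + original     # one byte added at the start

print(new_blocks(original, appended))   # only the trailing block is new
print(new_blocks(original, prepended))  # every block shifts, so all are new
```

Appending leaves the existing block boundaries alone, while inserting a single byte at the front moves every boundary and defeats the deduplication for the whole file.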

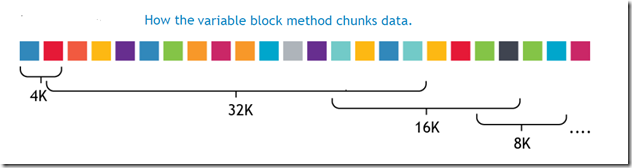

B – Variable-Length or Variable Block

In this data deduplication algorithm, the data is broken into chunks or blocks whose size is variable, for example 4 KB, 8 KB, or 16 KB, and changes dynamically during the process. The device/solution always calculates a fingerprint or signature on a variable-size block and checks whether there is a match. After a block is processed, it advances past that block, takes another block of a possibly different size, and the process repeats.

Advantages

Higher deduplication ratio, high storage space savings.

Disadvantages

While variable block deduplication may yield slightly better deduplication than the fixed block approach, it comes at a price: the CPU cycles that must be spent determining the block boundaries. The variable block approach requires more processing than fixed block because the whole file must be scanned, one byte at a time, to identify block boundaries.
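To make the "scan one byte at a time" cost concrete, here is a toy content-defined chunker in Python. It is a sketch under stated assumptions: the rolling sum over a 16-byte window is a deliberately simple stand-in for the stronger rolling hashes (such as Rabin fingerprints) that real implementations tend to use, and the function name `cdc_chunks` and all parameter values are made up for the example.

```python
def cdc_chunks(data: bytes, window: int = 16, mask: int = 0x3F,
               min_size: int = 32, max_size: int = 256):
    """Split data where a rolling sum over the last `window` bytes hits a pattern."""
    chunks = []
    start = 0      # index where the current chunk begins
    rolling = 0    # sum of the last `window` bytes of the current chunk
    for i, byte in enumerate(data):          # every byte is visited once
        rolling += byte
        if i - start >= window:
            rolling -= data[i - window]      # drop the byte leaving the window
        size = i - start + 1
        at_boundary = size >= min_size and (rolling & mask) == mask
        if at_boundary or size >= max_size:
            chunks.append(data[start:i + 1])
            start = i + 1
            rolling = 0
    if start < len(data):
        chunks.append(data[start:])          # trailing partial chunk
    return chunks


data = bytes((i * 37 + (i >> 3)) % 256 for i in range(2000))
sizes = [len(c) for c in cdc_chunks(data)]
print(len(sizes), "chunks, sizes between", min(sizes), "and", max(sizes))
```

Because a boundary depends only on the bytes in the window, an insertion early in a file tends to disturb the chunks around the edit while later boundaries can fall in the same places as before. The minimum and maximum sizes keep chunks from becoming degenerate.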

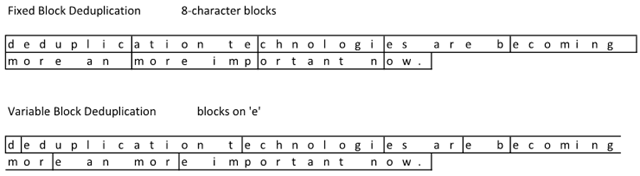

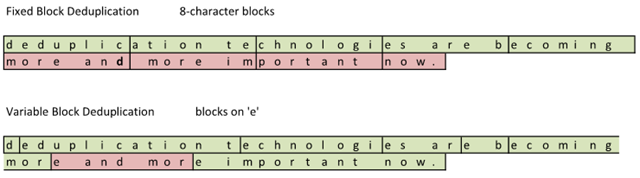

The following example, based on this sentence, explains it in detail: “deduplication technologies are becoming more an more important now.”

Notice how the variable block deduplication has some funky block sizes. While this does not look too efficient compared to fixed block, check out what happens when I make a correction to the sentence. Oops… it looks like I used ‘an’ when it should have been ‘and’. Time to change the file: “deduplication technologies are becoming more and more important now.” File –> Save

After the file was changed and deduplicated, this is what the storage subsystem saw:

The red sections represent the blocks that have changed. By adding a single character, a ‘d’, the sentence length shifted and more blocks suddenly changed. The Fixed Block solution saw 4 out of 9 blocks changed. The Variable Block solution saw 1 out of 9 blocks changed.

Variable block deduplication ends up providing a higher storage density and good storage space savings.
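The sentence example can be replayed in Python. The assumptions here are mine, not taken from the figures: fixed blocks are 8 bytes long, and the variable-block chunker simply cuts after every space, which is a simplified stand-in for real boundary detection. With those choices both methods produce 9 blocks for the sentence.

```python
import hashlib


def fixed_chunks(data: bytes, block_size: int = 8):
    """Fixed-length blocks of block_size bytes."""
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]


def word_chunks(data: bytes):
    """Variable-length blocks: cut after every space, so boundaries follow content."""
    chunks, start = [], 0
    for i, byte in enumerate(data):
        if byte == 0x20:  # ASCII space marks the end of a block
            chunks.append(data[start:i + 1])
            start = i + 1
    if start < len(data):
        chunks.append(data[start:])
    return chunks


def changed_blocks(old: bytes, new: bytes, chunker) -> int:
    """Blocks of `new` whose fingerprint is not already known from `old`."""
    known = {hashlib.sha1(c).hexdigest() for c in chunker(old)}
    return sum(1 for c in chunker(new)
               if hashlib.sha1(c).hexdigest() not in known)


old = b"deduplication technologies are becoming more an more important now."
new = b"deduplication technologies are becoming more and more important now."

print("fixed:   ", changed_blocks(old, new, fixed_chunks), "of", len(fixed_chunks(new)))
print("variable:", changed_blocks(old, new, word_chunks), "of", len(word_chunks(new)))
```

With fixed blocks, the block containing the edit and every block after it must be stored again; with content-defined boundaries only the block holding ‘and’ is new.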

2 – Content Aware or application-aware Deduplication

My next blog will be about the content aware dedupe.

Deduplication Internals : Part-1

Deduplication is one of the hottest technologies in the current market because of its ability to reduce costs. But it comes in many flavours, and organizations need to understand each of them to choose the one that is best for them. Deduplication can be applied to data in primary storage, backup storage, cloud storage, or data in flight for replication, such as LAN and WAN transfers. It offers the following benefits:

– Substantially saves disk space, reducing storage and hardware requirements,

– Improves bandwidth efficiency,

– Improves replication speed,

– Reduces the backup window and improves RTO and RPO,

– and finally, reduces cost.

What is data deduplication?

The concept is a familiar one that we see daily. A URL is a type of pointer: when someone shares a video on YouTube, they send the URL for the video instead of the video itself. There’s only one copy of the video, but it’s available to everyone. Deduplication uses this concept in a more sophisticated, automated way.

Data deduplication is a technique to reduce storage needs by eliminating redundant or duplicate data in your storage environment. Only one and unique copy of the data is retained on storage media, and redundant or duplicate data is replaced with a pointer to the unique data copy.

That is, it looks at the data on a sub-file (i.e., block) level and attempts to determine whether it has seen the data before. If it hasn’t, it stores it. If it has seen it before, it ensures that it is stored only once, and all other references to that duplicate data are merely pointers.

How does data deduplication work?

Dedupe technology typically divides data into smaller chunks/blocks and assigns each one a unique hash identifier called a fingerprint. To create the fingerprint, it uses an algorithm that computes a cryptographic hash value from the chunk/block, regardless of the data type. These fingerprints are stored in an index.

The deduplication algorithm compares the fingerprint of each data chunk/block to those already in the index. If the fingerprint exists in the index, the data chunk/block is replaced with a pointer to the existing chunk/block. If the fingerprint does not exist, the data is written to disk as a new unique data chunk.
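The index-and-pointer mechanism can be sketched as follows. This is a toy model under assumptions of my own: a 4-byte block size, SHA-1 as the fingerprint, and a made-up `ChunkStore` class in which a "file" is kept as a list of fingerprints acting as pointers.

```python
import hashlib


class ChunkStore:
    """Toy dedupe store: unique chunks live in an index, files are lists of pointers."""

    def __init__(self, block_size: int = 4):
        self.block_size = block_size
        self.index = {}  # fingerprint -> the single stored copy of that chunk

    def put(self, data: bytes):
        """Store data and return its recipe: one fingerprint (pointer) per chunk."""
        recipe = []
        for i in range(0, len(data), self.block_size):
            chunk = data[i:i + self.block_size]
            fingerprint = hashlib.sha1(chunk).hexdigest()
            # Written only if the fingerprint is not in the index yet.
            self.index.setdefault(fingerprint, chunk)
            recipe.append(fingerprint)
        return recipe

    def get(self, recipe) -> bytes:
        """Follow the pointers to rebuild the original data."""
        return b"".join(self.index[fp] for fp in recipe)


store = ChunkStore()
data = b"ABCDABCDABCDWXYZ"
recipe = store.put(data)
print(len(recipe), "pointers,", len(store.index), "unique chunks stored")
print(store.get(recipe) == data)
```

Reading the data back only needs the recipe and the index, which is why the duplicate chunks never have to be written a second time.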

Different types of de-duplication: dedupe methods can be broadly classified as follows.

1- Based on the technology, or how it is done.

Fixed-Length or Fixed Block Deduplication

Variable-Length or Variable Block Deduplication

Content Aware or application-aware deduplication

2- Based on the Process, or when it is done.

In-line (or as I like to call it, synchronous) de-duplication

Post-process (or as I like to call it, asynchronous) de-duplication

3- Based on the Type, or where it happens.

Source or Client side Deduplication

Target Deduplication

My next post will discuss these dedupe technologies and processes in detail.

![clip_image001[4] clip_image001[4]](https://pibytes.files.wordpress.com/2013/02/clip_image0014_thumb.png?w=587&h=104)

![clip_image001[10] clip_image001[10]](https://pibytes.files.wordpress.com/2013/02/clip_image00110_thumb.png?w=586&h=116)

![clip_image002[8] clip_image002[8]](https://pibytes.files.wordpress.com/2013/02/clip_image0028_thumb.png?w=609&h=100)